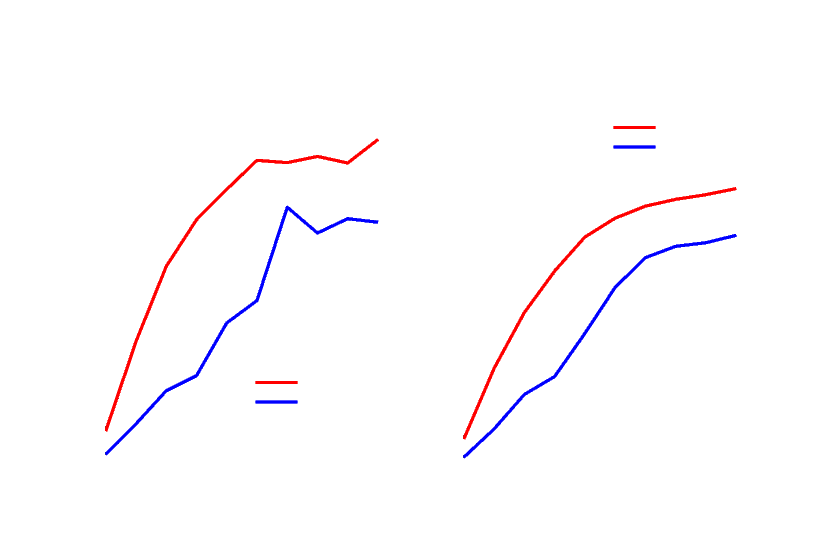

50% to 40% CPU on Linux, 15% to 14% CPU on Windows, and 24% to 18% CPU on 28 core ARM Linux! Another nice side-effect of the change is that the number of exceptions per second, as shown in the graph below, drastically dropped, which is always a nice thing to see. Not throwing exceptions resulted in reduced CPU usage in our connection close benchmarks. Throwing exceptions can be expensive and dotnet/aspnetcore#38094 identified an area in Kestrel’s Socket transport where we could avoid throwing an exception at one layer during connection closure. Removing dispatching on Windows gave another ~18% RPS increase resulting in a total of ~27% RPS increase, going from 800k RPS to 1.1m RPS. While this change by itself resulted in perf improvements, such as an ~11% increase in RPS for our JSON platform benchmark, it also allowed us to remove a thread pool dispatch in Kestrel in dotnet/aspnetcore#43449 that was previously there to get off the IO thread. Second, there is the potential for a small increase in CPU usage when a machine has low load due to work stealing needing to search more queues to find work.Ī significant change (windows specific) came from dotnet/runtime#64834, which switched the Windows IO pool to use a managed implementation. First off, strict FIFO ordering of work items to the global thread queue is no longer guaranteed because there are now multiple queues being read from. There are a couple tradeoffs that were made to get this perf increase. On our 80-core Ampere machine, the plaintext platform benchmark improved 514%, 2.4m RPS to 14.6m RPS, and the JSON platform improved 311%, 270k RPS to 1.1m RPS! Partitioning enables cores to operate on their own queues, which helps reduce contention and improve scalability with large core count machines. dotnet/runtime#69386 and dotnet/aspnetcore#42237 partitioned the global thread pool queue and the memory pool used by socket connections respectively. 
NET 7, we identified areas where many core machines weren’t scaling very well and fixed them to bring massive performance gains. General serverĪmpere machines are ARM based, have many cores, and are being used as servers in cloud environments due to their lower power consumption and parity performance with 圆4 machines. Although we only display the last few months of data so that the page will load in a reasonable amount of time. There are also some cases where I will reference our end-to-end benchmarks which are public at. In those cases I’ll either reference numbers that are gotten by running the benchmarks in the repository, or I’ll provide a simplified example to showcase what the improvement is doing.

Some of the benchmarks test internal types, and a self-contained benchmark cannot be written. Run dotnet run -c Release and enter the number of the benchmark you want to run when prompted.Add the benchmarking code snippet below that you want to run.Change Program.cs to var summary = BenchmarkSwitcher.FromAssembly(typeof(Program).Assembly).Run().Add a Nuget reference to BenchmarkDotnet ( dotnet add package BenchmarkDotnet) version 0.13.2+.Create a new console app ( dotnet new console).We will use BenchmarkDotNet for most of the examples in this blog post. Many of those improvements either indirectly or directly improve the performance of ASP.NET Core as well.

And, of course, it continues to be inspired by Performance Improvements in. This is a continuation of last year’s post on Performance improvements in ASP.NET Core 6. This blog post highlights some of the performance improvements in ASP.NET Core 7. NET apps are faster and use less resources. NET team and community contributors spend time making performance improvements, so.
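
To make the exception change more concrete, here is a simplified, hypothetical sketch of the general pattern. It is not Kestrel's actual code, and every type and method name in it (`ConnectionCloseSketch`, `ReadThrowing`, `TryReadViaCatch`, `TryRead`) is made up for illustration. Instead of having a lower layer throw when the peer closes the connection and catching that exception further up, the lower layer reports the close through its return value. Both paths observe the same outcome, but the non-throwing one never allocates, throws, or catches an exception on the close path.

```csharp
using System;
using System.IO;

// Hypothetical stand-ins for a transport layer; these are NOT Kestrel's real types.
public static class ConnectionCloseSketch
{
    // Exception-based style: the lower layer throws when the peer has closed
    // the connection, so the caller has to catch to detect the close.
    public static int ReadThrowing(bool peerClosed)
    {
        if (peerClosed)
        {
            throw new IOException("Connection closed by peer.");
        }
        return 42; // pretend 42 bytes were read
    }

    // Caller of the exception-based layer: turns the exception back into a result.
    public static bool TryReadViaCatch(bool peerClosed, out int bytesRead)
    {
        try
        {
            bytesRead = ReadThrowing(peerClosed);
            return true;
        }
        catch (IOException)
        {
            bytesRead = 0;
            return false;
        }
    }

    // Non-throwing style: the close is reported through the return value,
    // so no exception object is created on the connection-close path.
    public static bool TryRead(bool peerClosed, out int bytesRead)
    {
        if (peerClosed)
        {
            bytesRead = 0;
            return false;
        }
        bytesRead = 42; // pretend 42 bytes were read
        return true;
    }

    public static void Main()
    {
        // Show that both styles report the same outcome for an open and a closed connection.
        foreach (bool closed in new[] { false, true })
        {
            bool viaCatch = TryReadViaCatch(closed, out int n1);
            bool direct = TryRead(closed, out int n2);
            Console.WriteLine($"peerClosed={closed}: catch-based=({viaCatch}, {n1}), non-throwing=({direct}, {n2})");
        }
    }
}
```

This is written as a standalone console program. To compare the cost of the two paths in the BenchmarkDotNet project described earlier in this post, you could drop the `Main` method and call `TryReadViaCatch(true, out _)` and `TryRead(true, out _)` from two separate methods marked with BenchmarkDotNet's `[Benchmark]` attribute.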
